Discover How to Convert Hexadecimal Color Codes to SwiftUI Colors

An easy way to convert a hexadecimal color code into a Color in SwiftUI

Custom colors are part of almost every polished iOS application. Most UI/UX designers work in tools like Figma, Sketch, or Adobe XD, and these tools typically express colors in design specifications as hexadecimal (hex) color codes. SwiftUI, however, has no built-in initializer that creates a Color directly from a hex string, so developers have to convert these codes by hand or rely on helper functions.

In this article, we'll dive deep into what hexadecimal color codes are, why they are so prevalent in the design community, and most importantly, how to create a highly reusable Swift extension to seamlessly convert hex strings into SwiftUI Color objects.

What is a Hexadecimal Code?

Colors can be quickly written and communicated through color codes, either in RGB format or hexadecimal codes. For example, the standard color red can be represented in RGB as rgb(255, 0, 0) or in hexadecimal as #FF0000 or simply FF0000. Furthermore, it can include an alpha channel for transparency, resulting in an 8-character string like FF0000FF.

A hex color code writes each channel as a two-digit base-16 number, from 00 to FF (0 to 255 in decimal), in the order RRGGBB (Red, Green, Blue) or RRGGBBAA (Red, Green, Blue, Alpha). In an 8-character string, the "AA" pair is the alpha value, or opacity level. A value of "FF" means the color is fully opaque, while "00" means it is fully transparent and therefore invisible. Understanding this structure is the key to converting the string into the Double values that SwiftUI expects.
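As a small illustration of that structure, the sketch below decodes a single two-digit pair into its 0 to 255 value and then into an opacity fraction. The helper name `channelValue` is hypothetical and exists only for this example; it is not part of SwiftUI.

```swift
// Hypothetical helper: decode one two-digit hex pair such as "FF" into 0...255.
func channelValue(_ pair: String) -> UInt8? {
    // Accept exactly two ASCII hex digits (0-9, a-f, A-F); anything else is rejected.
    guard pair.count == 2,
          pair.allSatisfy({ $0.isASCII && $0.isHexDigit }) else { return nil }
    return UInt8(pair, radix: 16)
}

let red = channelValue("FF")      // 255: the channel at full intensity
let alpha = channelValue("80")    // 128: roughly half opacity

// Dividing by 255 gives the 0.0...1.0 fraction; 128 / 255 is about 0.502.
let opacity = Double(alpha ?? 255) / 255
```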

The Conversion Challenge in SwiftUI

In UIKit, developers often wrote extensions on UIColor to handle hex values. SwiftUI introduced the declarative Color struct, whose RGB initializer takes red, green, and blue values expressed as fractions between 0.0 and 1.0. Converting a hex string therefore means breaking it into its RGB components, converting each base-16 pair into a base-10 integer (0-255), and finally dividing each integer by 255 to get the required fraction.
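The three steps just described can be sketched in plain Swift, without SwiftUI, to make them easy to follow. The function name `channelFractions(fromHex:)` is an assumption made for illustration, and this version works on the string pair by pair rather than with bitwise operators.

```swift
// Hypothetical helper illustrating the steps; it returns the fractions instead of a Color.
func channelFractions(fromHex hex: String) -> [Double]? {
    let digits = Array(hex)
    // Only RRGGBB (6) or RRGGBBAA (8) ASCII hex digits are accepted here.
    guard digits.count == 6 || digits.count == 8,
          digits.allSatisfy({ $0.isASCII && $0.isHexDigit }) else { return nil }

    var fractions: [Double] = []
    // Step 1: walk the string two characters at a time (RR, GG, BB, optionally AA).
    for start in stride(from: 0, to: digits.count, by: 2) {
        let pair = String(digits[start...start + 1])
        // Step 2: convert the base-16 text into an integer in 0...255.
        guard let value = UInt8(pair, radix: 16) else { return nil }
        // Step 3: scale the integer into the 0.0...1.0 range.
        fractions.append(Double(value) / 255)
    }
    return fractions
}

let pureRed = channelFractions(fromHex: "FF0000")   // [1.0, 0.0, 0.0]
```

For an input such as "F26800" the result is roughly 0.949, 0.408, and 0.0, which are the values a Color initializer would receive for red, green, and blue.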

Creating the Custom Color Extension

To convert a hexadecimal color string into a SwiftUI Color effortlessly, you can create a Swift extension for the Color struct. This makes the new initializer available globally across your Xcode project. Let's look at the implementation:

```swift
import SwiftUI

extension Color {
    /// Creates a color from a 6-digit (RRGGBB) or 8-digit (RRGGBBAA) hex string.
    /// Returns nil if the string is not valid hex of one of those lengths.
    init?(hex: String) {
        // Trim non-alphanumeric characters, such as a leading "#", from both ends
        let hex = hex.trimmingCharacters(in: CharacterSet.alphanumerics.inverted)

        // Parse the whole string as base 16; any non-hex character makes this fail,
        // so an input like "ZZZZZZ" returns nil instead of silently becoming black
        guard let int = UInt64(hex, radix: 16) else { return nil }

        // Extract the R, G, B, and A components
        let a, r, g, b: UInt64
        switch hex.count {
        case 6: // Standard RGB format (e.g., FF0000)
            (r, g, b, a) = (int >> 16, int >> 8 & 0xFF, int & 0xFF, 255)
        case 8: // RGB format with Alpha (e.g., FF0000FF)
            (r, g, b, a) = (int >> 24, int >> 16 & 0xFF, int >> 8 & 0xFF, int & 0xFF)
        default:
            // Any other length is invalid
            return nil
        }

        // Initialize the standard SwiftUI Color using extracted values
        self.init(
            .sRGB,
            red: Double(r) / 255,
            green: Double(g) / 255,
            blue: Double(b) / 255,
            opacity: Double(a) / 255
        )
    }
}
```

By using the bitwise shift (>>) and bitwise AND (&) operators, we can efficiently slice the 64-bit integer into the distinct color channels.
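To see the shifts and masks at work on a concrete value, here is a minimal sketch using F26800, the code from this article's usage example. The constant names are chosen for illustration only.

```swift
let packed: UInt64 = 0xF26800   // RRGGBB packed into one integer

// Shift the wanted byte down to the lowest 8 bits, then mask off everything above it.
// In Swift, >> binds tighter than &, so `packed >> 8 & 0xFF` means `(packed >> 8) & 0xFF`.
let r = (packed >> 16) & 0xFF   // 0xF2 = 242
let g = (packed >> 8) & 0xFF    // 0x68 = 104
let b = packed & 0xFF           // 0x00 = 0

// With an alpha pair appended (RRGGBBAA), every channel sits 8 bits further left.
let packedWithAlpha: UInt64 = 0xF2680080
let a = packedWithAlpha & 0xFF              // 0x80 = 128
let rWithAlpha = packedWithAlpha >> 24      // 0xF2 = 242
```

The mask matters for every channel except the leftmost one: without it, the bits of the channels to the left would remain in the result.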

Real-World Usage Example

Once you have added the above extension to a utility file in your project, using it becomes exceptionally simple. You no longer have to define colors manually across multiple Views. Instead, you can inject the hex string directly where needed.

Here is an example demonstrating the application of our new extension to style a Text view's background:

```swift
struct ContentView: View {
    var body: some View {
        VStack {
            Text("Lorem ipsum dolor sit amet, consectetur adipiscing elit. Aenean luctus, turpis tristique venenatis lobortis, leo magna posuere magna, ac varius arcu magna at turpis")
                .foregroundColor(.white)
                .padding()
                // Using our custom hex initializer
                .background(Color(hex: "F26800"))
                .cornerRadius(10)
        }
        .padding()
    }
}
```

Common Pitfalls and Gotchas

- Forgetting the Question Mark: Notice the `init?(hex:)` signature. Because the string might be invalid (e.g., "NotAColor"), the initializer returns an Optional. You might need to provide a fallback color using the nil-coalescing operator, like `Color(hex: "XYZ") ?? Color.black`.
- Hashtag Issues: Real-world hex codes often begin with `#`. The code provided uses `.trimmingCharacters` to strip the hashtag so that the leading symbol doesn't break parsing.
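Both pitfalls can be tried outside SwiftUI with a small stand-in. The sketch below assumes a hypothetical helper, `packedHexValue`, that applies the same trimming and length check as the extension but returns the packed integer rather than a Color, so it can run in a playground or a command-line script.

```swift
import Foundation

// Hypothetical helper mirroring the extension's validation, returning the packed integer.
func packedHexValue(_ input: String) -> UInt64? {
    // Strip non-alphanumeric characters, such as "#", from both ends.
    let hex = input.trimmingCharacters(in: CharacterSet.alphanumerics.inverted)
    // Only 6-digit and 8-digit codes are accepted.
    guard hex.count == 6 || hex.count == 8 else { return nil }
    // Returns nil if any character is not a hex digit.
    return UInt64(hex, radix: 16)
}

let fallback: UInt64 = 0x000000                         // black
let withHash = packedHexValue("#F26800") ?? fallback    // leading # is stripped: 0xF26800
let invalid = packedHexValue("NotAColor") ?? fallback   // rejected, so the fallback is used
```

The same `??` pattern applies to the Color initializer: the fallback is only used when the string fails validation.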

Conclusion

Working closely with designers demands precision in color matching. By extending the native SwiftUI Color struct to accept hexadecimal strings, you narrow the gap between Figma mockups and working Swift code. It also keeps your code base cleaner and easier to maintain, because color values can be copied straight from the design specification.

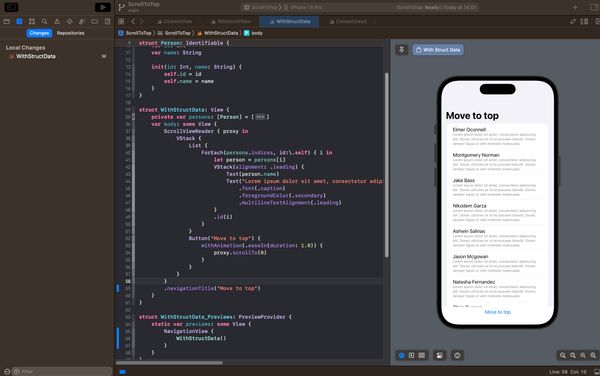

Below is another example in video format, illustrating the usage of the Color extension above with dynamic input from a user interface.